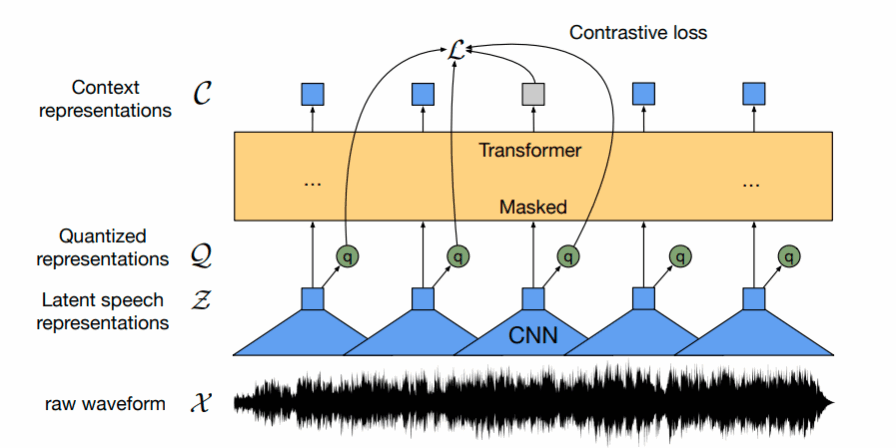

The recent advent of highly performant machine learning models has had a considerable impact on automatic speech recognition (ASR) systems in general and on ASR for low-resource languages in particular. Models trained on thousands of hours of labeled (or unlabeled) speech now achieve error rates that were inconceivable even ten years ago. However, such models exist mainly for large languages (above all English). Small and low-resource languages were typically left out because preparing appropriate training material was too costly or too complicated, given the large amounts of text and audio data required. Since the development of self-supervised learning frameworks like wav2vec2, low-resource languages have also seen considerable advances in speech recognition and related tasks. Instead of developing an ASR system for a small language entirely from scratch, one can now take one of the massive multilingual self-supervised models and fine-tune it with a smaller amount of data for a specific target language.

The case in point here is Luxembourgish, for which only limited resources are available. This study is based on Meta’s multilingual XLS-R model, a self-supervised model trained on roughly 500,000 hours of audio data in 128 languages. Fine-tuning this XLS-R model with some hours of Luxembourgish audio and matching orthographic transcriptions does indeed lead to surprisingly good recognition results. The model presented here was developed over the last few months, mostly by reusing recipes from Hugging Face.

The development consisted of three steps:

1. Preparation of training material

For fine-tuning, pairs of audio files and matching transcription files are necessary. Each audio file has a maximum duration of 10 seconds and roughly corresponds to one sentence.

At present, the training material consists of 11,000 sentences.
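The pairing and the 10-second limit described above can be checked automatically. The following is a minimal sketch, not the preparation script actually used: it assumes that each clip is a PCM WAV file named `<name>.wav` with its transcription in `<name>.txt` in the same folder, and the function names `clip_duration` and `collect_pairs` are purely illustrative.

```python
import wave
from pathlib import Path

MAX_SECONDS = 10.0  # upper bound on clip length mentioned in the text


def clip_duration(wav_path):
    """Return the duration of a PCM WAV file in seconds."""
    with wave.open(str(wav_path), "rb") as wav:
        return wav.getnframes() / wav.getframerate()


def collect_pairs(data_dir, max_seconds=MAX_SECONDS):
    """Pair each <name>.wav with <name>.txt (assumed layout) and keep short clips."""
    pairs = []
    for wav_path in sorted(Path(data_dir).glob("*.wav")):
        txt_path = wav_path.with_suffix(".txt")
        if not txt_path.exists():
            continue  # skip audio without a matching transcription
        if clip_duration(wav_path) > max_seconds:
            continue  # skip clips longer than the limit
        text = txt_path.read_text(encoding="utf-8").strip()
        pairs.append({"audio": str(wav_path), "sentence": text})
    return pairs
```

Calling `collect_pairs("my_data_folder")` (a hypothetical folder name) returns a list of dictionaries that can be turned into a training set with whichever data-loading tool is used for fine-tuning.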

2. Fine-tuning XLS-R with this Luxembourgish training material

Fine-tuning with this training data is intended to adapt the XLS-R model to the characteristics of the Luxembourgish phonetic structure. The training took approximately 30 hours on our HPC cluster using four GPUs.
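One preparatory step in the common Hugging Face recipes for fine-tuning wav2vec2-style models is to derive a character vocabulary from the transcriptions, because the fine-tuned model predicts characters rather than whole words. The sketch below assumes that such a character-level setup was used here, which the text does not state explicitly. The function name `build_vocab` is illustrative, and the special tokens `|` (word boundary), `[UNK]` and `[PAD]` follow the convention of those recipes.

```python
def build_vocab(sentences):
    """Map every character in the transcriptions to an integer id."""
    chars = set()
    for sentence in sentences:
        chars.update(sentence.lower())
    # The space is represented by an explicit word-boundary token instead.
    chars.discard(" ")
    chars.discard("|")
    vocab = {ch: idx for idx, ch in enumerate(sorted(chars))}
    vocab["|"] = len(vocab)      # word delimiter (assumed convention)
    vocab["[UNK]"] = len(vocab)  # placeholder for unseen characters
    vocab["[PAD]"] = len(vocab)  # padding token, typically reused as the CTC blank
    return vocab
```

For Luxembourgish, the resulting vocabulary would include characters such as `é`, `ë` and `ä` in addition to the basic Latin alphabet, provided they occur in the training transcriptions.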

3. Attaching a language model

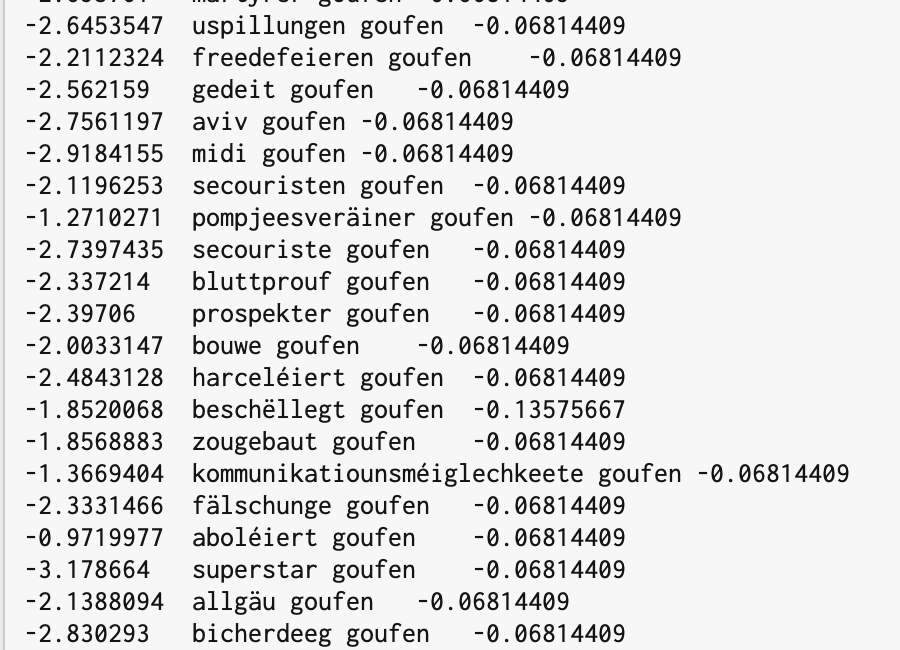

Because the vocabulary of the training material is still rather limited, the results of this purely acoustic recognition are often poor. To improve this, a language model has been attached to the acoustic model. Following this script, an n-gram model was created from a Luxembourgish text corpus (size: ~100 million words). These n-grams are accessible to the wav2vec2 model during recognition and can improve the recognition quality significantly.
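To illustrate why n-grams help, here is a toy bigram model with add-one smoothing that prefers word sequences it has seen in a text corpus. It is only a sketch of the idea: it is not the toolkit or script used for the actual model, the class name `BigramLM` is made up for this example, and a real language model built from ~100 million words would use a dedicated n-gram toolkit with higher-order n-grams.

```python
import math
from collections import Counter


class BigramLM:
    """Toy bigram language model with add-one (Laplace) smoothing."""

    def __init__(self, corpus_sentences):
        self.contexts = Counter()  # how often each word occurs as a left context
        self.bigrams = Counter()   # how often each word pair occurs
        self.vocab = set()
        for sentence in corpus_sentences:
            tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
            self.vocab.update(tokens)
            self.contexts.update(tokens[:-1])
            self.bigrams.update(zip(tokens, tokens[1:]))

    def log_prob(self, sentence):
        """Return the smoothed log-probability of a whitespace-tokenized sentence."""
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        vocab_size = len(self.vocab)
        total = 0.0
        for prev, word in zip(tokens, tokens[1:]):
            numerator = self.bigrams[(prev, word)] + 1
            denominator = self.contexts[prev] + vocab_size
            total += math.log(numerator / denominator)
        return total


# Tiny illustrative corpus; the real corpus is far larger.
lm = BigramLM(["net ënner all bréck", "ënner all bréck e floss"])
candidates = ["ënner all réckefloss", "ënner all bréck"]
best = max(candidates, key=lm.log_prob)
print(best)
```

In an actual decoder, such a language model score is typically combined with the acoustic score of each candidate during beam search rather than applied to finished transcriptions as done here.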

Some examples

In the following, some examples are presented, with the recognized text displayed alongside the ground truth of the audio sample. Although the results are clearly not yet ready for a production system, they are nevertheless a promising first step, and the model can now easily be improved with more data. In the future, it will be necessary to enlarge both the training data and the language model.

Result: an dat elei vum terr zusummen wärenechr verschidden saachen nit passéiert

Ground truth: an dat mat de Leit vum Terrain zesummen da wäre ganz sécher verschidde Saachen nit passéiert

Result: sin och der méenug dasst fro vun der sozialer gerechtegkeet e permenenten emer am politeschen ee bas sin

Ground truth: Ech sinn och der Meenung dass d’Fro vun der sozialer Gerechtegkeet e permanenten Theema am politeschen Debat muss sinn.

Result: och wann di selwer gebake kichelcher komesch geroch un huet de gu iwwerze

Ground truth: Och wann di selwer gebake kickelcher komesch geroch hunn huet de Goût iwwerzeegt

Result: dass net ënner all réckefloss

Ground truth: ‚t ass net ënner all Bréck e Floss

Result: jo sch benotzen deen aus rock an zwar enger säi vireen ze luewen boras sou gutt respektiv am négtif hues de gesivitsaugeran es

Ground truth: jo, ech benotzen deen Ausdrock an zwar engersäits fir een ze luewen boah wat ass d’Sa gutt respekiv am Negativen hues de gesi wéi d’Sa gerannt ass

Result: a wann zenkrase gerappt huet

Ground truth: Jo, wann d’Sa eng krass gerappt huet
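Comparisons like the ones above can be quantified with the word error rate (WER), i.e. the number of word substitutions, deletions and insertions needed to turn the recognized text into the ground truth, divided by the number of words in the ground truth. The following is a minimal sketch for own experiments; it is not the evaluation code used for this model, and it only lowercases the text, so punctuation and apostrophes would need additional normalization.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference words
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


# One of the examples above, lowercased by the function itself.
reference = "Och wann di selwer gebake kickelcher komesch geroch hunn huet de Goût iwwerzeegt"
hypothesis = "och wann di selwer gebake kichelcher komesch geroch un huet de gu iwwerze"
print(f"WER: {word_error_rate(reference, hypothesis):.2f}")
```

Note that WER counts a near miss such as a single wrong letter as a full word error, so a character-level variant of the same computation can give a complementary picture.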

Availability

The current model is available through my account on Hugging Face. For your own tests, it is convenient to use the ASR pipeline in a local Python environment:

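```python
from transformers import pipeline

# Load the fine-tuned acoustic model together with its attached language model.
pipe = pipeline("automatic-speech-recognition", model="pgilles/wav2vec2-large-xls-r-LUXEMBOURGISH-with-LM")

# Replace the placeholder with the path to your own sound file.
pipe("<sound file>")
```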

If you are interested in further developing and fine-tuning this model, get in touch.